For the last 20 years, analytics was designed around clicks.

You chose a dataset, picked fields, applied filters, and assembled charts and dashboards. If you wanted an answer, you had to know how the system worked well enough to ask the question correctly. That world made sense when analytics tools could only respond to structure.

Now we’re handing people a blank text box to ask AI.

Analytics for the AI era doesn’t look like analytics. It isn’t built for humans clicking through interfaces anymore. It’s built for systems that can respond directly to how humans think.

That single change breaks almost everything we’ve assumed about analytics.

When users can start with intent instead of schema, the job is no longer to help them navigate data. It’s about helping machines interpret human language, context, and ambiguity at scale.

And it doesn’t stop there. Gathering the data used to be just the setup, and the real work came next: analyzing, understanding, interpreting. AI can often take that next step directly for the user.

Search used to mean typing keywords, then scanning links to find the answer to your real question. Now, you just ask your question and get an answer. Data is heading the same way.

That changes where the work begins, what information matters, and what “good” analytics means.

Legacy BI vs AI analytics

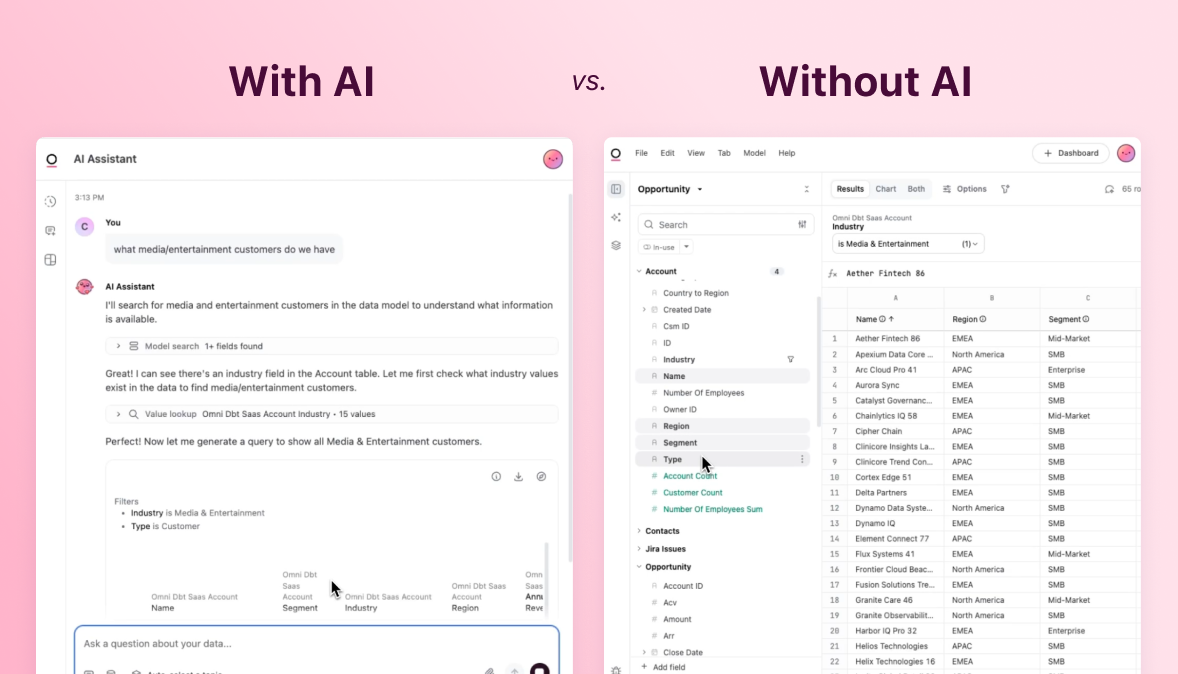

Traditional analytics forces you to make several decisions before you can even ask your question. Pick a dataset. Select fields. Figure out which joins matter. You slowly build toward the question you want to ask.

If you’ve done this for years, it feels “normal.” If you haven’t, it feels impossible. This is why the promise of self-service BI still breaks down.

Most people don’t struggle with data because they lack curiosity. They struggle because knowing what you want is very different from knowing how to get there. The focus has always been on teaching people how to use tools instead of how to ask questions.

AI lets people start with the question.

You don’t need to understand the structure of the data or the tool; it’s all about your intent. What do you want to know? “How is the business doing this quarter?” “Why did pipeline change?” “Which customers should I worry about?”

These aren’t analytical queries. They’re human questions. They’re imprecise and often ambiguous. They’re also the kinds of questions analysts receive every day: signals of what someone is actually trying to understand, long before that intent is translated into fields, joins, or a dashboard.

Think about the question “What did we close this quarter?” When I used to ask Omni, it would return opportunities that were both closed won and closed lost.

This result is technically correct, but practically wrong. In the database, “closed” means something very specific. But when people ask that question, they mean “won.” We just never had to write that down anywhere, because analysts knew to assume our intent.

That’s when I realized it wasn’t a modeling problem. AI for data is a language problem.

Traditional BI tries to solve language gaps by forcing people to learn the schema. The tool puts up guardrails and forces users to click through the UI and follow the rules of the tool. AI requires the opposite approach. Prompts are the new UI; you don’t need to understand the schema to ask what you want.

And because AI handles overwhelming, messy inputs well, it can tackle work that would be impossible for humans in traditional BI. Wide tables with 200 columns, inconsistent naming, huge text blocks? To an analyst, that’s a nightmare. It’s work you’d actively try to avoid for as long as you could. But for AI, it’s no problem. You don’t have to narrow the scope; you can go as wide as you want.

But it doesn’t just work on its own.

Designing for AI analytics

You can’t simply point an LLM at your data and expect it to know that when you ask for “closed” you mean “won,” or that when you ask for “orders” you don’t want to include “returns.” Or maybe you do want to include them?

Every business speaks its own language, and the understanding of that language doesn’t live in columns, but in context.

When you hire a new data analyst, you don’t just hand them the keys to your tools and wish them luck. You explain how the business works, how you measure things, and which numbers are trusted vs. the ones still up for debate. You teach them all the things that never quite made it into documentation.

If you want AI to be useful, you have to do the same thing. An LLM doesn’t know what you mean on day one. That context has to be taught.

At first, this felt uncomfortable to me as someone who was raised in finance and loves statistics. I was trained to avoid overfitting, to never train on the test set or bake in assumptions.

With AI analytics, overfitting is exactly what you want. This is why context, and the experts who have it, matter more than ever.

You’re not training a predictive model. You’re teaching shared meaning. When someone says “closed deals,” you want the system to know they mean wins. When someone says “quarter,” you want it to know whether that means fiscal or calendar. When someone says “renewal,” you want it to know what that actually includes in your business.
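To make “teaching shared meaning” concrete, here is a minimal Python sketch of the kind of knowledge involved: a small glossary that pins “closed deals” and “renewal” to explicit filters, plus the two competing readings of “quarter.” Everything here is hypothetical and for illustration only: the `GLOSSARY` entries, the field names such as `opportunity.stage`, and the assumption that the fiscal year starts on February 1.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical: this example business starts its fiscal year on February 1.
FISCAL_YEAR_START_MONTH = 2


@dataclass(frozen=True)
class Term:
    """A business term plus the explicit meaning you would teach a new analyst."""
    definition: str
    filters: dict[str, str]


# Hypothetical glossary; field names and values are illustrative, not a real schema.
GLOSSARY: dict[str, Term] = {
    "closed deals": Term("Opportunities that closed as wins", {"opportunity.stage": "Closed Won"}),
    "renewal": Term("Contracts renewed by existing customers", {"opportunity.type": "Renewal"}),
}


def calendar_quarter(d: date) -> int:
    """Calendar quarter (1-4): Q1 is January through March."""
    return (d.month - 1) // 3 + 1


def fiscal_quarter(d: date, start_month: int = FISCAL_YEAR_START_MONTH) -> int:
    """Fiscal quarter (1-4) for a fiscal year that begins in start_month."""
    months_into_year = (d.month - start_month) % 12
    return months_into_year // 3 + 1


# The same date lands in different quarters depending on which "quarter" the user means.
jan_15 = date(2024, 1, 15)
print(calendar_quarter(jan_15), fiscal_quarter(jan_15))  # prints: 1 4
```

Neither reading is wrong in isolation, which is the point: the system can only pick the right one if someone has written down which one this business means.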

Learning from the reality of your business will beat perfect modeling every time, because perfect data doesn’t exist. Businesses change too much.

Once we realized this, we started encoding our intent directly in Omni to teach our product how we speak. We added an ai_context field to our semantic layer, giving us a dedicated place to capture all the specific, messy ways we talk about the business.

Then we monitored AI output, and every time it got something wrong, we fixed it. Not just in that isolated query, but in the model so that every interaction improves AI output for everyone.
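The loop above can be sketched in a few lines of Python. This is a hypothetical illustration, not Omni’s actual model format or API: `Topic`, `record_correction`, and `build_prompt` are invented names, and the only idea borrowed from the text is that corrections are stored once in a shared `ai_context` field so every later question benefits, rather than patched into a single query.

```python
from dataclasses import dataclass, field


@dataclass
class Topic:
    """Hypothetical semantic-layer topic; this is not Omni's actual model format."""
    name: str
    fields: list[str]
    # Free-text notes on how people actually talk about this data (hypothetical shape).
    ai_context: list[str] = field(default_factory=list)


def record_correction(topic: Topic, note: str) -> None:
    """Save a fix in the shared model, not in one query, so every later question sees it."""
    if note not in topic.ai_context:
        topic.ai_context.append(note)


def build_prompt(topic: Topic, question: str) -> str:
    """Assemble the context an LLM would receive alongside the user's question."""
    notes = "\n".join(f"- {note}" for note in topic.ai_context) or "- (none yet)"
    return (
        f"Topic: {topic.name}\n"
        f"Fields: {', '.join(topic.fields)}\n"
        f"Business context:\n{notes}\n"
        f"Question: {question}"
    )


opportunities = Topic("opportunities", ["stage", "amount", "close_date"])
# A reviewer sees "closed" return won and lost deals, and fixes it once, in the model.
record_correction(opportunities, 'Treat "closed" as stage = "Closed Won" unless the user says "lost".')
print(build_prompt(opportunities, "What did we close this quarter?"))
```

The design choice that matters is where the fix lives: because the note sits on the shared topic, the next person who asks about “closed” deals gets the corrected interpretation without anyone repeating the work.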

This is critical. Reliable AI isn’t a checkbox; it’s a process, and human involvement can’t be overlooked. This is where I think we, the people who know the business, are far more useful now.

The result may seem simple, but it makes me so happy. You ask what you want to know, and you get back an answer that understands what you meant.

Today, most of my own analysis starts with a question typed in plain language. My questions are usually shorthand and almost certainly include typos. I can do it the old way, but I don’t want to do more work than I have to.

I let the system do the work first. Find the right fields, make the joins, apply details, scan far, far too many fields. Then, I come in to react, tweak, and go deeper.

I spend less time setting things up and more time focusing on what matters and what to do next. I love that AI lets us be lazy in a good, more productive way.

Building the habit

You have to build for it.

The teams getting the most value from AI are doing the unglamorous work. They’re naming things clearly, adding synonyms, watching where answers miss, and fixing the underlying context so the next question gets better results.
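Adding synonyms is the simplest piece of that work to picture. Below is a minimal, hypothetical Python sketch: a synonym table that maps the words people actually use onto canonical field names, and a resolver that picks them out of a plain-language question. The table contents and field names are invented for illustration; a real system would resolve terms with far more than exact word matching.

```python
import re

# Hypothetical synonym table mapping everyday vocabulary to canonical fields.
SYNONYMS: dict[str, str] = {
    "revenue": "opportunity.amount",
    "bookings": "opportunity.amount",
    "arr": "opportunity.amount",
    "rep": "opportunity.owner_name",
    "salesperson": "opportunity.owner_name",
    "region": "account.region",
    "territory": "account.region",
}


def resolve_fields(question: str) -> set[str]:
    """Return the canonical fields a plain-language question refers to."""
    words = re.findall(r"[a-z]+", question.lower())
    return {SYNONYMS[word] for word in words if word in SYNONYMS}


print(sorted(resolve_fields("Bookings by salesperson and territory?")))
```

Every time an answer misses because someone used a word the table doesn’t know, adding one entry fixes that phrasing for everyone who asks it next.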

This has quietly become a new part of analytics work. Less about building dashboards, more about teaching systems how your business actually thinks. You can call it analytics context engineering, but what really matters is building the habit. We do this on our own data every day, and data teams at customers like Cribl, Heidi, and Photoroom are doing this too because AI is a process.

| You still need to think about… | You need to think differently about… |
|---|---|
| Data model | How you describe things. Treat it like it’s for humans: add synonyms, descriptions, etc. |
| Security & permissions | Testing & tuning; it has to be iterative. AI is a process, not a snapshot. |
| All the analytics functions. AI doesn’t just solve for these; you need to teach it. | Monitoring so you learn from intent data. This data is magic. |
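One way to start learning from intent data is sketched below in Python, under loudly hypothetical assumptions: each logged question carries a flag saying whether a reviewer marked the answer wrong, and the words in missed questions that the model doesn’t already know are counted to suggest where new synonyms or context are needed. `Interaction`, `KNOWN_TERMS`, and `STOPWORDS` are invented for this example.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged question and whether a reviewer flagged the answer as wrong."""
    question: str
    answer_was_wrong: bool


# Hypothetical: terms the model already understands, plus filler words to ignore.
KNOWN_TERMS = {"revenue", "bookings", "region"}
STOPWORDS = {"what", "did", "we", "this", "the", "by", "how", "is", "in", "our"}


def unknown_terms_in_misses(log: list[Interaction], top_n: int = 5) -> list[tuple[str, int]]:
    """Count unfamiliar words in questions whose answers missed, most frequent first."""
    counts = Counter()
    for item in log:
        if not item.answer_was_wrong:
            continue
        for word in re.findall(r"[a-z]+", item.question.lower()):
            if word not in KNOWN_TERMS and word not in STOPWORDS:
                counts[word] += 1
    return counts.most_common(top_n)


log = [
    Interaction("What did we close this quarter?", True),
    Interaction("Renewals this quarter", True),
    Interaction("Revenue by region", False),
]
print(unknown_terms_in_misses(log, top_n=1))  # prints: [('quarter', 2)]
```

Here the log points straight at “quarter,” the same fiscal-or-calendar ambiguity discussed earlier, as the first thing worth teaching the model.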

Analytics for the AI era doesn’t look like analytics because the job has changed. It’s no longer just about building dashboards. It’s about building shared understanding so machines can handle the boring parts and people can focus on what actually matters.